This is a gemini-2.5-flash translation of a Chinese article.

It has NOT been vetted for errors. You should have the original article open in a parallel tab at all times.

By Su Jianlin | 2018-10-07 | 180377 Readers

Foreword#

Last year, I wrote an introductory article on WGAN-GP, 《The Art of Mutual Opposition: From Zero to WGAN-GP》, which mentioned adding a Lipschitz constraint (hereinafter referred to as “L-constraint”) to WGAN’s discriminator through gradient penalty. A few days ago, while musing, I thought of WGAN again and always felt that WGAN’s gradient penalty was not elegant enough. Later, I also heard that WGAN is tricky in conditional generation (because random interpolation between different classes starts to get messy…), so I began to ponder whether a new solution could be devised to add an L-constraint to the discriminator.

After working in isolation for a few days, I found that what I came up with had already been done by others. Indeed, if you can think of it, someone else has probably already done it. It is mainly covered in these two papers: 《Spectral Norm Regularization for Improving the Generalizability of Deep Learning》 and 《Spectral Normalization for Generative Adversarial Networks》.

So, this article will, following my own line of thought, provide a simple introduction to L-constraint related topics. Note that the main topic of this article is the L-constraint, not just WGAN. It can be applied in generative models as well as in general supervised learning.

L-constraint and Generalization#

Sensitivity to Perturbations#

Let the input be $x$, the output be $y$, the model be $f$, and the model parameters be $w$. We denote it as:

$$ \begin{equation}y = f_w(x)\end{equation} $$Many times, we hope to obtain a “robust” model. What does robustness mean? Generally, it has two meanings: first, stability to parameter perturbations, for example, whether the model can still achieve similar results after becoming $f_{w+\Delta w}(x)$? In dynamic systems, one also needs to consider whether the model can eventually return to $f_w(x)$; second, stability to input perturbations, for example, if the input changes from $x$ to $x+\Delta x$, whether $f_w(x+\Delta x)$ can provide similar prediction results. Readers might have heard of “adversarial attack samples” in deep learning models, where, for instance, changing just one pixel in an image leads to a completely different classification result. This is an example of a model being overly sensitive to input.

L-constraint#

Therefore, most of the time we hope that the model is insensitive to input perturbations, which usually improves the model’s generalization performance. That is, we hope that when $\Vert x_1 - x_2 \Vert$ is small,

$$ \begin{equation}\Vert f_w(x_1) - f_w(x_2)\Vert\end{equation} $$is also as small as possible. Of course, what “as small as possible” exactly means, no one can say for sure. Thus, Lipschitz proposed a more specific constraint, which is that there exists some constant $C$ (which depends only on the parameters, not on the input), such that the following equation always holds:

$$ \begin{equation}\Vert f_w(x_1) - f_w(x_2)\Vert\leq C(w)\cdot \Vert x_1 - x_2 \Vert\end{equation} $$That is, we hope the entire model is “controlled” by a linear function. This is the L-constraint.

In other words, here we consider a model that satisfies the L-constraint to be a good model. Furthermore, for a specific model, we hope to estimate the expression for $C(w)$, and we want $C(w)$ to be as small as possible; a smaller $C(w)$ means the model is less sensitive to input perturbations and has better generalization.

Neural Networks#

Here, we analyze specific neural networks to observe when they satisfy the L-constraint.

For simplicity, let’s consider a single-layer fully connected network $f(Wx+b)$, where $f$ is the activation function, and $W,b$ are the parameter matrix/vector. In this case, the equation above becomes:

$$ \begin{equation}\Vert f(Wx_1+b) - f(Wx_2+b)\Vert\leq C(W,b)\cdot \Vert x_1 - x_2 \Vert\end{equation} $$If $x_1,x_2$ are sufficiently close, then the left side can be approximated by a first-order term, yielding:

$$ \begin{equation}\left\Vert \frac{\partial f}{\partial y}W(x_1 - x_2)\right\Vert\leq C(W,b)\cdot \Vert x_1 - x_2 \Vert\end{equation} $$Here $y=Wx_2 + b$. Obviously, for the left side not to exceed the right side, the absolute value of the term $\partial f / \partial y$ (each element) must not exceed some constant. This requires us to use activation functions whose ‘derivatives have upper and lower bounds’. However, the activation functions we commonly use, such as sigmoid, tanh, ReLU, etc., all satisfy this condition. Assuming the gradient of the activation function is bounded, especially for the commonly used ReLU activation function where this bound is 1, the term $\partial f / \partial y$ only introduces a constant. We temporarily ignore it. What remains is for us to consider only $\Vert W(x_1 - x_2)\Vert$.

Multi-layer neural networks can be analyzed recursively step-by-step, ultimately reducing to a single-layer neural network problem. And structures like CNNs and RNNs are essentially special forms of fully connected networks, so the results for fully connected networks can still be applied. Therefore, for neural networks, the problem becomes: if

$$ \begin{equation}\Vert W(x_1 - x_2)\Vert\leq C\Vert x_1 - x_2 \Vert\end{equation} $$always holds, what can be the value of $C$? After finding the expression for $C$, we can hope for $C$ to be as small as possible, thereby introducing a regularization term $C^2$ for the parameters.

Matrix Norms#

Definition#

In fact, at this point, we have transformed the problem into a matrix norm problem (the role of a matrix norm is equivalent to the magnitude of a vector), which is defined as:

$$ \begin{equation}\Vert W\Vert_2 = \max_{x\neq 0}\frac{\Vert Wx\Vert}{\Vert x\Vert}\end{equation} $$If $W$ is a square matrix, this norm is also called the “spectral norm”, “spectral radius”, etc. In this article, even if it’s not a square matrix, we will simply refer to it as “spectral norm”. Note that $\Vert Wx\Vert$ and $\Vert x\Vert$ both refer to vector norms, which are ordinary vector magnitudes. The matrix norm on the left side was not explicitly defined originally, but it is defined by the limit of the vector model on the right, so such matrix norms are called “matrix norms induced by vector norms”.

Alright, let’s not dwell on fancy concepts. With the concept of vector norms, we have:

$$ \begin{equation}\Vert W(x_1 - x_2)\Vert\leq \Vert W\Vert_2\cdot\Vert x_1 - x_2 \Vert\end{equation} $$Uh, actually, we haven’t done much; we just changed the notation. We still haven’t figured out what $\Vert W\Vert_2$ equals.

Frobenius Norm#

In fact, the precise concept and calculation method of the spectral norm $\Vert W\Vert_2$ still require a good deal of linear algebra concepts. We won’t delve into it for now. Instead, let’s first study a simpler norm: the Frobenius norm, or F-norm for short.

The name might sound intimidating, but its definition is quite simple:

$$ \begin{equation}\Vert W\Vert_F = \sqrt{\sum_{i,j}w_{ij}^2}\end{equation} $$In plain terms, it simply treats the matrix as a vector and then calculates the Euclidean magnitude of that vector.

Simply by using the Cauchy-Schwarz inequality, we can prove that

$$ \begin{equation}\Vert Wx\Vert\leq \Vert W\Vert_F\cdot\Vert x \Vert\end{equation} $$Evidently, $\Vert W\Vert_F$ provides an upper bound for $\Vert W\Vert_2$. That is to say, you can understand $\Vert W\Vert_2$ as the most accurate $C$ in the equation $\Vert W(x_1 - x_2)\Vert\leq C\Vert x_1 - x_2 \Vert$ (the smallest $C$ among all $C$ satisfying that equation). However, if you are not overly concerned with precision, you can directly choose $C=\Vert W\Vert_F$, which also makes that equation hold, as $\Vert W\Vert_F$ is easy to calculate.

L2 Regularization Term#

As mentioned earlier, to make the neural network satisfy the L-constraint as well as possible, we should hope for $C=\Vert W\Vert_2$ to be as small as possible. We can add $C^2$ as a regularization term to the loss function. Of course, we haven’t calculated the spectral norm $\Vert W\Vert_2$ yet, but we have calculated a larger upper bound, $\Vert W\Vert_F$. Let’s use that for now, so the loss is:

$$ \begin{equation}loss = loss(y, f_w(x)) + \lambda \Vert W\Vert_F^2\end{equation} $$The first part refers to the model’s original loss. Let’s revisit the expression for $\Vert W\Vert_F$. We find that the added regularization term is:

$$ \begin{equation}\lambda\left(\sum_{i,j}w_{ij}^2\right)\end{equation} $$Isn’t this just L2 regularization?

Finally, after some tinkering, we got a payoff: we revealed the connection between L2 regularization (also known as weight decay) and the L-constraint, indicating that L2 regularization enables the model to better satisfy the L-constraint, thereby reducing the model’s sensitivity to input perturbations and enhancing its generalization performance.

Spectral Norm#

Principal Eigenvalue#

In this section, we formally address the spectral norm $\Vert W\Vert_2$. This is a topic in linear algebra and is relatively theoretical.

In fact, the spectral norm $\Vert W\Vert_2$ is equal to the square root of the largest eigenvalue (principal eigenvalue) of $W^{\top}W$. If $W$ is a square matrix, then $\Vert W\Vert_2$ is equal to the absolute value of the largest eigenvalue of $W$.

$$ \Vert W\Vert_2^2 = \max_{x\neq 0}\frac{x^{\top}W^{\top} Wx}{x^{\top} x} = \max_{\Vert x\Vert=1}x^{\top}W^{\top} Wx $$Note: For readers interested in the theoretical proof, here’s a brief outline of the proof’s idea. According to the definition of $\Vert W\Vert_2$, we have:

Assume $W^{\top} W$ is diagonalizable into $\text{diag}(\lambda_1,\dots,\lambda_n)$, i.e., $W^{\top} W=U^{\top}\text{diag}(\lambda_1,\dots,\lambda_n)U$, where all $\lambda_i$ are its eigenvalues and are non-negative, and $U$ is an orthogonal matrix. Since the product of an orthogonal matrix and a unit vector is still a unit vector, then:

$$ \begin{aligned}\Vert W\Vert_2^2 =& \max_{\Vert x\Vert=1}x^{\top}\text{diag}(\lambda_1,\dots,\lambda_n) x \

=& \max_{\Vert x\Vert=1} \lambda_1 x_1^2 + \dots + \lambda_n x_n^2\ \leq & \max{\lambda_1,\dots,\lambda_n} (x_1^2 + \dots + x_n^2)\quad(\text{Note that }\Vert x\Vert=1)\ =&\max{\lambda_1,\dots,\lambda_n}\end{aligned} $$

Thus, $\Vert W\Vert_2^2$ is equal to the largest eigenvalue of $W^{\top} W$.

Power Iteration#

Perhaps some readers are getting impatient: who cares if it’s equal to an eigenvalue? I’m concerned about how to calculate this pesky norm!!

In fact, while the previous content might seem confusing, it forms the basis for calculating $\Vert W\Vert_2$. The previous section told us that $\Vert W\Vert_2^2$ is the largest eigenvalue of $W^{\top}W$. So the problem becomes finding the largest eigenvalue of $W^{\top}W$, which can be solved by the Power Iteration method.

The so-called “power iteration” is an iterative scheme as follows:

$$ \begin{equation}u \leftarrow \frac{(W^{\top}W)u}{\Vert (W^{\top}W)u\Vert}\end{equation} $$After several iterations, we finally obtain the norm (i.e., an approximation of the largest eigenvalue) by:

$$ \begin{equation}\Vert W\Vert_2^2\approx u^{\top}W^{\top}Wu\end{equation} $$Alternatively, it can be equivalently rewritten as:

$$ \begin{equation}v\leftarrow \frac{W^{\top}u}{\Vert W^{\top}u\Vert},\,u\leftarrow \frac{Wv}{\Vert Wv\Vert},\quad \Vert W\Vert_2 \approx u^{\top}Wv\end{equation} $$Thus, after initializing $u,v$ (e.g., with all-ones vectors), one can iterate several times to obtain $u,v$, and then substitute them into $u^{\top}Wv$ to calculate an approximate value of $\Vert W\Vert_2$.

$$ u^{(0)} = c_1 \eta_1 + \dots + c_n \eta_n $$Note: For readers interested in the proof, here’s a simple one demonstrating why this iteration works.

Let $A=W^{\top}W$, initialized as $u^{(0)}$. Assume also that $A$ is diagonalizable, and that among its eigenvalues $\lambda_1,\dots,\lambda_n$, the largest eigenvalue is strictly greater than the others (if this condition is not met, it means the largest eigenvalue is a repeated root, which makes the discussion a bit more complex; readers should consult professional proofs for that, this is merely an introduction. Of course, from a numerical computation perspective, almost no two numbers are perfectly equal, so repeated roots are unlikely to occur in experiments.). Then the eigenvectors $\eta_1,\dots,\eta_n$ of $A$ form a complete basis, so we can set:

$$ A^r u^{(0)} = c_1 A^r \eta_1 + \dots + c_n A^r \eta_n $$Each iteration is $Au/\Vert Au\Vert$. The denominator only changes the magnitude, which we can apply at the very end; let’s just look at the repeated application of $A$:

$$ A^r u^{(0)} = c_1 \lambda_1^r \eta_1 + \dots + c_n \lambda_n^r \eta_n $$Note that for an eigenvector, $A\eta = \lambda \eta$, thus:

$$ \frac{A^r u^{(0)}}{\lambda_1^r} = c_1 \eta_1 + c_2 \left(\frac{\lambda_2}{\lambda_1}\right)^r \eta_2 + \dots + c_n \left(\frac{\lambda_n}{\lambda_1}\right)^r \eta_n $$Without loss of generality, let $\lambda_1$ be the largest eigenvalue. Then:

$$ \frac{A^r u^{(0)}}{\lambda_1^r} \approx c_1 \eta_1 $$According to the assumption, $\lambda_2/\lambda_1,\dots,\lambda_n /\lambda_1$ are all less than 1, so as $r\to\infty$, they all tend to zero, or rather, they can be ignored when $r$ is sufficiently large. Thus, we have:

$$ Au=\lambda_1 u $$Ignoring the magnitude for now, this result indicates that when $r$ is sufficiently large, $A^r u^{(0)}$ provides an approximate direction for the eigenvector corresponding to the largest eigenvalue. In fact, the normalization at each step is only to prevent overflow. This way, $u = A^r u^{(0)}/\Vert A^r u^{(0)}\Vert$ is the corresponding unit eigenvector, i.e.:

$$ u^{\top}Au=\lambda_1 u^{\top}u=\lambda_1 $$Therefore:

This calculates the square of the spectral norm.

Spectral Regularization#

Earlier, we showed the relationship between the Frobenius norm and L2 regularization. We also explained that the Frobenius norm is a stronger (or coarser) condition, and the more accurate norm should be the spectral norm. Although the spectral norm is not as easy to compute as the Frobenius norm, it can still be approximated by iterating a few steps using the equation above.

Therefore, we can propose the concept of “Spectral Norm Regularization”, which involves adding the square of the spectral norm as an additional regularization term, replacing the simple L2 regularization term. That is, the equation above becomes:

$$ \begin{equation}loss = loss(y, f_w(x)) + \lambda \Vert W\Vert_2^2\end{equation} $$The paper 《Spectral Norm Regularization for Improving the Generalizability of Deep Learning》 has already conducted multiple experiments, showing that ‘spectral regularization’ can improve model performance on various tasks.

In Keras, the spectral norm can be calculated using the following code:

| |

Generative Models#

WGAN#

If, in ordinary supervised training models, the L-constraint merely serves as “icing on the cake”, then in the discriminator of WGAN, the L-constraint is an indispensable key step. This is because the optimization objective for WGAN’s discriminator is:

$$ \begin{equation}W(P_r,P_g)=\sup_{|f|_L = 1}\mathbb{E}_{x\sim P_r}[f(x)] - \mathbb{E}_{x\sim P_g}[f(x)]\end{equation} $$Here $P_r,P_g$ are the real distribution and the generated distribution, respectively. $|f|_L = 1$ means satisfying the specific L-constraint $|f(x_1) - f(x_2)| \leq \Vert x_1 - x_2\Vert$ (where $C=1$). Thus, the above objective means that among all functions satisfying this L-constraint, the one that maximizes $\mathbb{E}_{x\sim P_r}[f(x)] - \mathbb{E}_{x\sim P_g}[f(x)]$ is the most ideal discriminator. Written in the form of a loss, it is:

$$ \begin{equation}\min_{|f|_L = 1} \mathbb{E}_{x\sim P_g}[f(x)] - \mathbb{E}_{x\sim P_r}[f(x)]\end{equation} $$Gradient Penalty#

Currently, a relatively effective solution is the gradient penalty. That is, $\Vert f'(x)\Vert = 1$ is a sufficient condition for $|f|_L = 1$. So, I add this term to the discriminator’s loss as a penalty term, i.e.:

$$ \begin{equation}\min_{f} \mathbb{E}_{x\sim P_g}[f(x)] - \mathbb{E}_{x\sim P_r}[f(x)] + \lambda (\Vert f'(x_{inter})\Vert-1)^2\end{equation} $$In fact, I think adding $relu(x)=\max(x,0)$ would be better:

$$ \begin{equation}\min_{f} \mathbb{E}_{x\sim P_g}[f(x)] - \mathbb{E}_{x\sim P_r}[f(x)] + \lambda \max(\Vert f'(x_{inter})\Vert-1, 0)^2\end{equation} $$where $x_{inter}$ is obtained through random interpolation:

$$ \begin{equation}\begin{aligned}&x_{inter} = \varepsilon x_{real} + (1 - \varepsilon) x_{fake}\\ &\varepsilon\sim U[0,1],\quad x_{real}\sim P_r,\quad x_{fake}\sim P_g \end{aligned}\end{equation} $$Gradient penalty cannot guarantee $\Vert f'(x)\Vert = 1$, but intuitively it fluctuates around 1, so $|f|_L$ theoretically also fluctuates around 1, thereby approximately achieving the L-constraint.

This method works quite well in many situations, but it performs poorly when the number of classes in real samples is large (especially for conditional generation). The problem lies in random interpolation: in principle, the L-constraint must be satisfied across the entire space. However, gradient penalty via linear interpolation can only guarantee satisfaction within a small localized region. If this small region happens to be roughly the space between real and generated samples, then it might just suffice. But if there are many classes and interpolation occurs between different classes, it often leads to points being interpolated into unknown regions where the L-condition should be met but isn’t, causing the discriminator to fail.

Thought: Can gradient penalty be directly used as a regularization term for supervised models? Interested readers can experiment with it~

Spectral Normalization#

The problem with gradient penalty is that it’s just a penalty and only works locally. The truly ingenious solution is a constructive method: build a special $f$ such that $f$ satisfies the L-constraint regardless of its parameters.

In fact, when WGAN was first proposed, it used weight clipping—clipping all parameter absolute values to not exceed a certain constant. This ensured that the Frobenius norm of the parameters would not exceed a certain constant, and thus $|f|_L$ would not exceed a certain constant. Although it didn’t precisely achieve $|f|_L=1$, this only scaled the loss by a constant factor, hence not affecting the optimization result. Weight clipping is a constructive method, but this particular constructive method is not friendly to optimization.

From a simple perspective, this clipping method has a large optimization space. For example, changing it to clipping the Frobenius norm of all parameters to not exceed a certain constant would offer more model flexibility than direct weight clipping. If clipping feels too aggressive, one could instead use a parameter penalty, i.e., imposing a large penalty on all parameters whose norms exceed the Frobenius norm. I have experimented with this, and it is generally effective, but the convergence speed is relatively slow.

However, all the above methods are just approximations. Now that we have the spectral norm, we can use the most precise solution: replace all parameters $w$ in $f$ with $w/\Vert w\Vert_2$. This is Spectral Normalization, proposed and experimented with in the paper 《Spectral Normalization for Generative Adversarial Networks》. This way, if the absolute value of the derivative of the activation function used in $f$ does not exceed 1, then we have $|f|_L\leq 1$, thus achieving the required L-constraint with the most precise method.

Note: “The absolute value of the derivative of the activation function does not exceed 1” is usually satisfied. However, if the discriminator model uses a residual connection, the activation function would effectively be $x + relu(Wx+b)$, and in this case, its derivative might not necessarily be less than or equal to 1. Nevertheless, it will not exceed a constant, so it does not affect the optimization result.

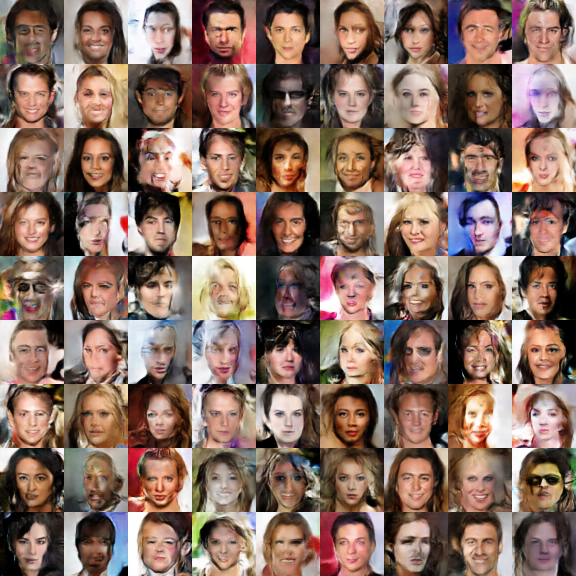

I have personally tried using spectral normalization in WGAN (without adding gradient penalty, see reference code below), and found that the final convergence speed (epochs required to achieve the same effect) is faster than WGAN-GP, and the results are even better. Moreover, another factor affecting speed is the runtime per epoch: gradient penalty takes longer than spectral normalization. This is because with gradient penalty, it’s equivalent to calculating second-order gradients during gradient descent, requiring two full forward passes, thus making it slower.

Keras Implementation#

In Keras, implementing spectral normalization can be both simple and not so simple.

It’s simple because you just need to pass the kernel_constraint parameter to every convolutional and fully connected layer in the discriminator, and the gamma_constraint parameter to BN layers. The constraint is written as:

| |

Reference code: https://github.com/bojone/gan/blob/master/keras/wgan_sn_celeba.py

It’s not so simple because the kernel_constraint in the current Keras (version 2.2.4) does not actually change the kernel; it only adjusts the kernel values after gradient descent. This is different from how spectral normalization is applied in the paper. If used in this way, you’ll find that gradients become inaccurate in later stages, leading to poor generation quality from the model. To truly modify the kernel, we either need to redefine all layers (convolutional, fully connected, BN, and all layers involving matrix multiplication) or modify the source code. This involves changing the add_weight method of the Layer object in keras/engine/base_layer.py, which originally was (starting at line 222 currently):

| |

Modify it to:

| |

That is, change constraint in K.variable to None, and execute the constraint at the end~ Note, don’t immediately complain about Keras being too rigidly encapsulated or inflexible just because you see that source code modification is needed. If you were to use other frameworks, it would generally be many times more complex than Keras (relative to the amount of change for GANs without spectral normalization).

(Update: A new implementation method that doesn’t require modifying the source code can be found here.)

Summary (formatted)#

This article is a summary on Lipschitz constraints, mainly introducing how to make models better satisfy Lipschitz constraints, which relates to the model’s generalization ability. The concept that is comparatively more difficult is the spectral norm, which involves a good deal of theory and formulas.

Overall, the content related to spectral norms is quite ingenious, and the related conclusions further indicate that linear algebra is closely intertwined with machine learning; many “profound” linear algebra concepts can find corresponding applications in machine learning.

| |